
attribute(inputs, target=None, additional_forward_args=None, feature_mask=None, perturbations_per_eval=1, show_progress=False, **kwargs)

This function is almost equivalent to FeatureAblation.attribute. The main difference is the way ablated examples are generated. Specifically, they are generated through the perm_func, as we set the baselines for FeatureAblation.attribute to None.

inputs (Tensor or tuple[Tensor, ...]) – Input for which permutation attributions are computed. If forward_func takes a single tensor as input, a single input tensor should be provided. If forward_func takes multiple tensors as input, a tuple of the input tensors should be provided. It is assumed that for all given input tensors, dimension 0 corresponds to the number of examples (aka batch size), and if multiple input tensors are provided, the examples must be aligned appropriately.

target (int, tuple, Tensor, or list, optional) – Output indices for which difference is computed (for classification cases, this is usually the target class). If the network returns a scalar value per example, no target index is necessary.

For general 2D outputs, targets can be either:

- a single integer or a tensor containing a single integer, which is applied to all input examples
- a list of integers or a 1D tensor, with length matching the number of examples in inputs (dim 0). Each integer is applied as the target for the corresponding example.

For outputs with > 2 dimensions, targets can be either:

- a single tuple, which contains #output_dims - 1 elements. This target index is applied to all examples.
- a list of tuples with length equal to the number of examples in inputs (dim 0), and each tuple containing #output_dims - 1 elements. Each tuple is applied as the target for the corresponding example.

additional_forward_args (Any, optional) – If the forward function requires additional arguments other than the inputs for which attributions should not be computed, this argument can be provided.
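The target rules above can be made concrete with a small NumPy sketch. The helper `select_target` is hypothetical and written only for illustration; it is not part of the library and only mimics how each accepted form of `target` picks one scalar per example from a model output.

```python
import numpy as np

def select_target(output, target):
    """Hypothetical helper: pick one scalar per example from a model output."""
    rows = np.arange(output.shape[0])
    if isinstance(target, int):
        # Single integer: the same output index for every example (2D output).
        return output[:, target]
    if isinstance(target, tuple):
        # Single tuple of (#output_dims - 1) indices, applied to all examples.
        return output[(slice(None), *target)]
    if isinstance(target, list) and isinstance(target[0], tuple):
        # One tuple per example, for outputs with more than 2 dimensions.
        return np.array([output[(i, *t)] for i, t in enumerate(target)])
    # List or 1D array of integers: one output index per example.
    return output[rows, np.asarray(target)]

out2d = np.arange(6).reshape(3, 2)       # 3 examples, 2 output values each
out3d = np.arange(24).reshape(3, 2, 4)   # 3 examples, output of shape (2, 4)

same_for_all = select_target(out2d, 1)
per_example = select_target(out2d, [0, 1, 0])
tuple_for_all = select_target(out3d, (1, 2))
tuple_per_example = select_target(out3d, [(0, 0), (1, 3), (0, 2)])
```

In every case the result has one entry per example, which is the quantity whose change is measured after shuffling.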

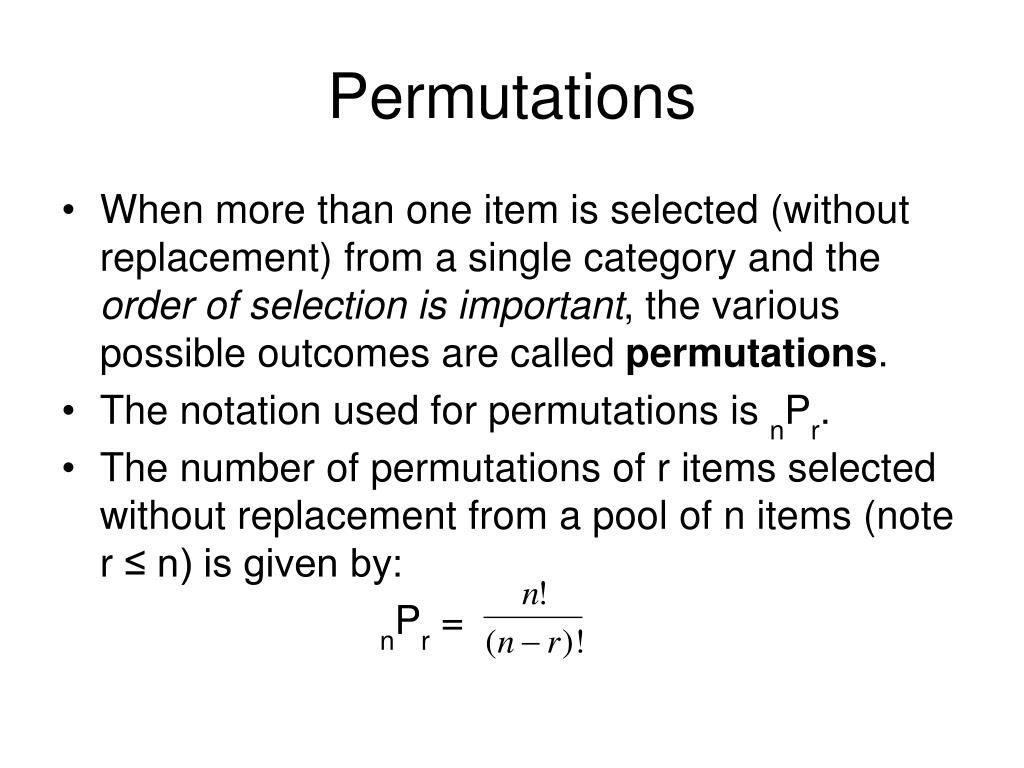

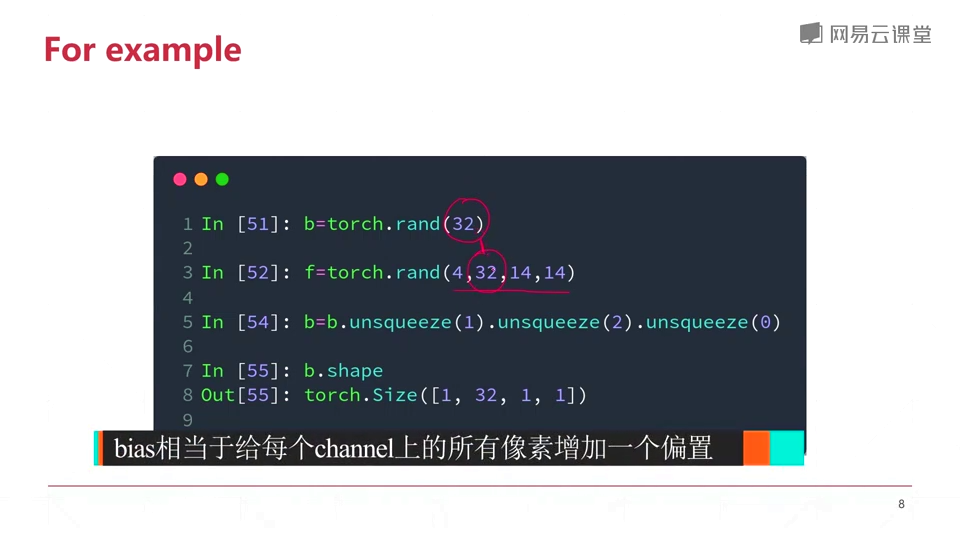

This method, unlike other attribution methods, requires a batch of examples to compute attributions and cannot be performed on a single example.

By default, each scalar value within each input tensor is taken as a feature and shuffled independently. Passing a feature mask allows grouping features to be shuffled together. Each input scalar in the group will be given the same attribution value, equal to the change in target as a result of shuffling the entire feature group.

The forward function can either return a scalar per example, or a single scalar for the full batch. If a single scalar is returned for the batch, perturbations_per_eval must be 1, and the returned attributions will have first dimension 1, corresponding to feature importance across all examples in the batch.

It should be noted that the error_metric must be called in the forward_func. You do not need to have an error metric, e.g. you could simply return the logits (the model output), but this may or may not provide a meaningful attribution.

More information can be found in the permutation feature importance algorithm description.

forward_func (Callable) – The forward function of the model or any modification of it.

perm_func (Callable, optional) – A function that accepts a batch of inputs and a feature mask, and “permutes” the feature using the feature mask across the batch. This defaults to a function which applies a random permutation; this argument only needs to be provided if a custom permutation behavior is desired.
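As a rough illustration of the shuffling procedure described above, here is a minimal NumPy sketch. It is not the library's implementation: `permutation_importance`, the toy `forward_func`, and the data are all assumptions made for this example. The forward function computes the error metric itself and returns a single scalar for the whole batch, so each feature gets one attribution for the batch.

```python
import numpy as np

def permutation_importance(forward_func, inputs, rng):
    """Sketch: one attribution per input column, for a forward_func that
    returns a single scalar (here an error metric) for the whole batch."""
    baseline = forward_func(inputs)
    importance = np.zeros(inputs.shape[1])
    for feature in range(inputs.shape[1]):
        # Work on a copy, so there is nothing to "un-permute" afterwards.
        permuted = inputs.copy()
        # Shuffle this one feature across the batch; other columns stay put.
        permuted[:, feature] = rng.permutation(inputs[:, feature])
        importance[feature] = baseline - forward_func(permuted)
    return importance

rng = np.random.default_rng(0)
X = rng.normal(size=(256, 3))
y = 2.0 * X[:, 0]  # labels depend on feature 0 only

def forward_func(batch):
    # Toy "model" and error metric in one callable: mean squared error.
    pred = 2.0 * batch[:, 0]
    return float(np.mean((pred - y) ** 2))

scores = permutation_importance(forward_func, X, rng)
```

Because the score is `baseline - error` and the forward function returns an error, the feature the labels depend on ends up with a negative score, while the two unused features score exactly zero.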
Example pseudocode for the algorithm:

    perm_feature_importance(batch):
        importance = dict()
        baseline_error = error_metric(model(batch), batch_labels)
        for each feature:
            permute this feature across the batch
            error = error_metric(model(permuted_batch), batch_labels)
            importance[feature] = baseline_error - error
            "un-permute" the feature across the batch
        return importance

I have the following function, which does what I want using numpy.array, but breaks when feeding a torch.Tensor due to indexing errors.

    output = np.zeros((len(arr), len(arr), 2, *arr.shape), dtype=arr.dtype)

    output = torch.zeros(len(arr), len(arr), 2, *arr.shape, dtype=arr.dtype)

Feeding a torch.Tensor fails with:

    torch_combs = combination_matrix(features)
      File "/home/XXX/util.py", line 218, in combination_matrix
      File "/home/XXX/util.py", line 212, in torch_combination_matrix
        output = arr.permute(2, 1, 0, *np.arange(3, num_dims))
    RuntimeError: number of dims don't match in permute

How do I match the torch behavior to numpy? I've read various questions on the torch forums (e.g. this one with only one dimension), but couldn't find how to apply this here. Similarly, index_select only works for one dimension, but I need it to work for at least 2 dimensions.

You just need to flatten the indices, then reshape and permute the dimensions. This is the full working version:

    import torch

    output_shape = (2, len(arr), len(arr), *arr.shape)  # Note that this is different to numpy!
    return arr.reshape(output_shape).permute(2, 1, 0, *range(3, len(output_shape)))

I used pytest to run this on random arrays of different dimensions, and it seems to work in all cases:

    import pytest

    @pytest.mark.parametrize("random_dims", range(1, 5))
    def test_combination_matrix(random_dims):
        dim_size = np.random.randint(1, 40, size=random_dims)
        elements = np.random.random(size=dim_size)
        np_combs = combination_matrix(elements)
        features = torch.from_numpy(elements)
        torch_combs = combination_matrix(features)
        assert np.array_equal(np_combs, torch_combs.numpy())
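The code fragments above are incomplete. Index expressions in square brackets appear to have been lost, so the following is only a sketch of the "flatten the indices, then reshape and permute" idea under stated assumptions. The names `mesh`, `np_combination_matrix`, and `torch_combination_matrix`, the `[1:]` slice on the shape, and the `indexing="xy"` argument (used to match NumPy's `meshgrid` default) are my assumptions, not the original author's code.

```python
import numpy as np
import torch

def np_combination_matrix(arr):
    # Assumed NumPy reference: entry [i, j] holds the pair (arr[i], arr[j]).
    idxs = np.arange(len(arr))
    mesh = np.stack(np.meshgrid(idxs, idxs))  # shape (2, n, n)
    num_dims = arr.ndim + 2
    return arr[mesh].transpose((2, 1, 0, *range(3, num_dims)))

def torch_combination_matrix(arr):
    idxs = torch.arange(len(arr))
    mesh = torch.stack(torch.meshgrid(idxs, idxs, indexing="xy"))
    # Leading axes are (2, n, n) before the permute, unlike the NumPy output.
    output_shape = (2, len(arr), len(arr), *arr.shape[1:])
    # Flatten the indices, gather rows, then reshape and permute.
    flat = arr[mesh.flatten()]
    return flat.reshape(output_shape).permute(2, 1, 0, *range(3, len(output_shape)))

arr = np.arange(24.0).reshape(4, 3, 2)
np_out = np_combination_matrix(arr)
torch_out = torch_combination_matrix(torch.from_numpy(arr))
```

Under these assumptions both functions return an array of shape `(n, n, 2, *arr.shape[1:])` in which `out[i, j, 0]` is `arr[i]` and `out[i, j, 1]` is `arr[j]`.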
